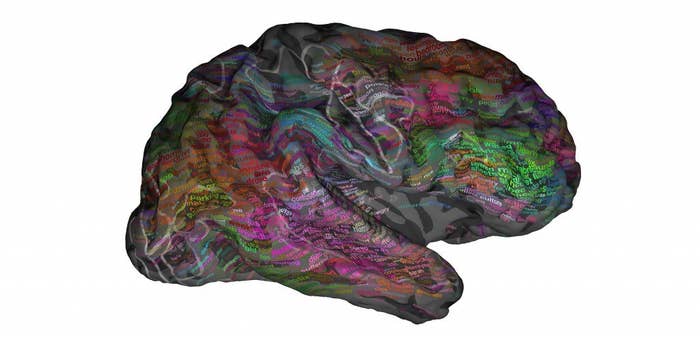

Scientists have used brain scanning techniques to create a "map" showing where words are encoded in our brains.

The team, working at the University of California in Berkeley, used "functional magnetic resonance imaging" (fMRI) scanners to study blood flow in the brains of seven volunteers as they listened to two hours of the Moth Radio Hour podcast. They found that as the subjects heard certain words, activity in parts of their brains grew stronger. And they were able to predict which parts of the brain would light up when certain types of words were heard.

The study was published today in the journal Nature.

And interestingly, that map seemed to be pretty much the same in all seven subjects they looked at.

The particular areas that lit up tended to be the same in all the volunteers. "We’re finding places in the brain where certain words or phrases are likely to elicit a response, consistently," Dr Alex Huth, one of the Berkeley researchers, told BuzzFeed News. "You could say that's where they're encoded."

"It’s an impressive work," Dr Barry Devereux, a neurolinguist from Cambridge University's Centre for Speech, Language and the Brain who was not involved in the study, told BuzzFeed News. "It uses a lot of information about the speech, and relates it to the fMRI data in a rich and complete way."

The map breaks down by concept. For instance, words referring to people and social interaction tended to appear together.

The study divided words into 12 categories: visual, tactile, numeric, locational, abstract, temporal, professional, violent, communal, mental, emotional and social. The researchers found that words in the more human, social categories (such as "social", "violent", "emotional", or "communal") tended to be grouped together. Similarly, words in categories to do with perceptions, numbers, and places were grouped.

This parcelling up of words into areas is slightly surprising, Devereux said. "There's been a lot of debate about the idea that there are different regions in the brain for different semantic ideas. It's controversial." The alternative possibility is that semantic information is distributed more evenly around the brain, he said, but this study suggests it's not.

So far the study has only looked at a small number of subjects, all of them Western and educated, but the researchers hope its findings are universal to humans.

"I strongly suspect that it’s universal," said Huth. "The things we see are very universal human things. The separation of words describing people and interactions from words describing visual or tactile properties, I think that’s a very natural distinction. I would think that any neurotypical person would have that distinction."

Devereux agreed: "We’d hope that these results would generalise."

Some words were represented in more than one brain area.

"One of the cool things," Huth said, "is that we're not seeing a brain area for a word. We’re seeing each brain area representing a bunch of different words, and each word in a bunch of areas."

So, as the video above explains, the word "top" stimulates a small area in a part of the brain associated with clothing and appearances. But it also activates another area, associated with buildings and places, and a third, associated with numbers and measurements.

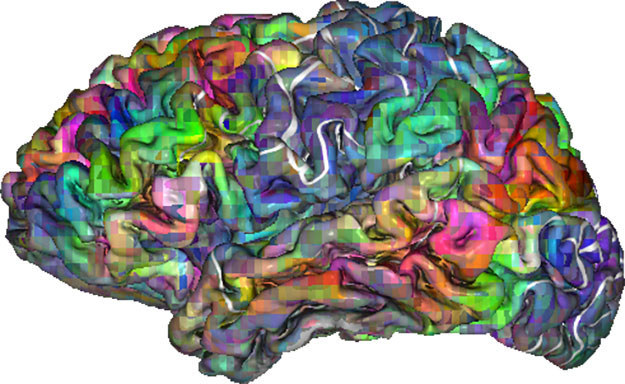

The researchers were also surprised by how much of the brain was involved in language.

"One of the most interesting aspects that we take away from these results," said Huth, "is that the amount of brain that’s involved in understanding language is massive." About 20–30% of the cortex, the complex outer layer of the brain, which is uniquely large in humans, is activated at some point or another by speech and language, he said. "And it’s all these areas that are not traditionally thought of as being involved in language."

They also found that language processing goes on roughly equally on both sides of the brain.

Previous studies have suggested that most of the brain's language activity goes on in the left hemisphere. This study found it was "relatively symmetrical" across both hemispheres. The authors suggest that those earlier studies may have tended to look at shorter words and phrases, and that long narratives such as the one they played their subjects involve the right side more.

The research could pave the way for "mind-reading" devices that could give a voice to people who have lost the ability to speak, although that is a long way off.

"It’s a really good step along the path to being able to decode what someone is hearing from their brain activity," said Huth. "And maybe that’s close enough to what people are thinking to decode thoughts that way." He thinks people with locked-in syndrome or motor neurone disease – the condition Stephen Hawking suffers from – might benefit in future, "but that's a few more stops along the road". At the moment, he said, "lying in an MRI machine every time you want to talk isn’t exactly practical".

Devereux agreed: "I think it’s quite far down the line. What they've done requires over two hours of listening and over 10,000 words of speech. With enough data you could make those sorts of inferences, but the amount of data you'd need would be huge."

Before that, Huth said, an interesting next step would be to look at atypical groups to see if they’re different – for instance, seeing whether the social aspect is less clear in people with autistic spectrum disorder.