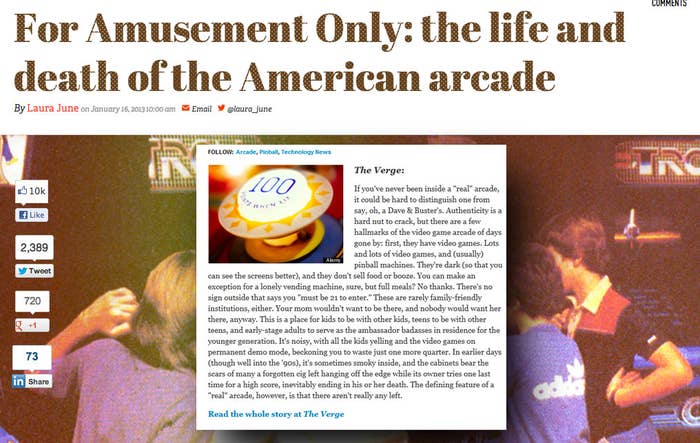

On Jan. 16, The Verge published a story about the past and current state of the American arcade industry. It was nearly 8,000 words long, beautifully laid out, and accompanied by a TV-quality video documentary.

On the 21st, The Huffington Post published a short post with a link to the story. It contained only a snippet of intro text, taken directly from the original post. Its headline was almost identical: "The Life And Death Of The American Arcade" to The Verge's "For Amusement Only: The life and death of the American arcade." Yet, after the 21st, if you tried to search for this story using any number of reasonable queries, including "life and death of the american arcade," the first result on Google was the Huffington Post version. The Verge's version didn't even crack the first page in Google News.

The Verge's editor-in-chief was understandably upset by this, and appealed to The Huffington Post to take down its post:

The Verge writes great stuff for HuffPo. RT @laura_june really awesome scraper site http://t.co/mDsrgqdz -- Joshua Topolsky

Formal public request. @bbosker and @HuffingtonPost, please remove the content you've scraped from us. http://t.co/mDsrgqdz Seriously. -- Joshua Topolsky

What's most egregious about this @HuffingtonPost scrape is its theft of our SEO on title and text. Google "death of the american arcade" -- Joshua Topolsky

HuffPo's solution was to cut the post down to a couple lines of text with a link. The title remained the same, and the story still outranks The Verge's for many obvious search queries, either by way of the Google News module at the top of the results page or directly.

The problem with the post isn't that it exists, or that it "over-aggregated" The Verge. It was a relatively short excerpt with a prominent link. HuffPo's only visible sin from a blogging perspective was including a "The Verge" byline without the site's permission. Asked why they published the post, HuffPo's senior communications director, Tiffany Guarnaccia, told BuzzFeed: "Just like virtually every other news site, we post linkouts as a service to our readers so they can easily find great stories." Many of these links end up on The Huffington Post's front page, or its vertical pages, which can send traffic to the original stories.

She pointed out that BuzzFeed publishes link out pages too — that is, small stubs of outside stories that exist primarily to link to the source, and to give us a way to link out to other sites using our CMS. This is true.

A prominent Huffington Post link out can drive tens or even hundreds of thousands of clicks, and the site is generous with them. It can rightly claim to be a massive source of traffic for other publishers. But stub stories like this serve other purposes too: They send people to sites in the hope that they'll come back to HuffPo to comment, for example, not unlike Reddit posts; and they provide an illusion of more content on a given vertical page. Most important, they're an apparently deliberate play for Google traffic.

Business Insider reposts links in a similar fashion in order to include them in its front page "river" column. Deputy Editor Nich Carlson explains, "When readers click on the story's headline in our river, they go directly to the original story. Likewise, our CMS automatically puts a link to the original story in our tweets." These posts, like HuffPo's, are a sort of CMS hack, and not really meant to be read on their own.

But BI's stub stories rarely show up in Google and almost never outrank the stories they link to. This is by design: "We put a note for Google in the post's metadata that tells Google to ignore our post, and give the 'juice' to the original story. We do this by noting a canonical link," says Carlson. "It would be a lousy user experience to come to our site from Google and see 'Click here to read the [publication] story,'" he says, noting more generally that it's just "a nice way to treat other publishers."

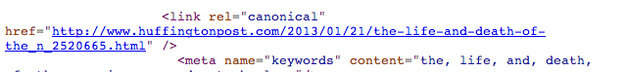

On a typical webpage, canonical links serve a different purpose: They give search engines a primary URL to index, in case the page exists under multiple URLs. This prevents duplication in search results from the same site. For content that's syndicated (or in HuffPo's case, partially syndicated), canonical links can point back to the content's original location, to make sure Google knows where it came from. As of Thursday, HuffPo's stub contains a canonical link, but it points back to itself.
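To make the distinction concrete, here is a minimal sketch of what a canonical link looks like in a page's markup and how a crawler might read it. The URLs, the sample HTML, and the function names (`canonical_url`, `credits_other_site`) are hypothetical, made up for illustration; this is not Google's logic or either site's actual markup, only a demonstration of the `<link rel="canonical">` element described above.

```python
from html.parser import HTMLParser
from typing import Optional
from urllib.parse import urlsplit


class CanonicalFinder(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag it sees."""

    def __init__(self) -> None:
        super().__init__()
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr = dict(attrs)
        # rel may hold several space-separated tokens; compare case-insensitively.
        rels = (attr.get("rel") or "").lower().split()
        if "canonical" in rels and attr.get("href"):
            self.canonical = attr["href"]


def canonical_url(html: str) -> Optional[str]:
    """Return the canonical URL declared in the markup, or None if absent."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


def credits_other_site(page_url: str, html: str) -> bool:
    """True if the page's canonical link points at a different host."""
    target = canonical_url(html)
    if target is None:
        return False
    return urlsplit(target).netloc.lower() != urlsplit(page_url).netloc.lower()


# Hypothetical stub that hands credit to the original story.
STUB_URL = "https://aggregator.example/2013/01/arcade-stub"
GENEROUS_STUB = (
    '<html><head><link rel="canonical" '
    'href="https://original.example/arcade-feature" /></head></html>'
)
# Hypothetical stub whose canonical link points back to itself.
SELF_STUB = (
    '<html><head><link rel="canonical" '
    'href="https://aggregator.example/2013/01/arcade-stub" /></head></html>'
)

print(credits_other_site(STUB_URL, GENEROUS_STUB))
print(credits_other_site(STUB_URL, SELF_STUB))
```

The first stub follows the approach Carlson describes, naming the original story as canonical; the second declares itself the primary version, which is the pattern seen on the HuffPo stub.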

In its response to FWD, The Huffington Post did not address the question "Without Google, would these posts exist?" The lack of a canonical link pointing to the original, together with the use of the same headline, suggests that they probably wouldn't. Despite ranking higher on Google and much higher on Google News, the HuffPo link has been tweeted just 54 times (mostly in anger). The Verge's story has been tweeted over 2,000 times.

In reality, this isn't very damaging to The Verge, and for The Huffington Post, whose tech section is full of reported original content, it's an etiquette breach at worst. (BuzzFeed founder Jonah Peretti also cofounded The Huffington Post and helped create its SEO strategy.)

Recently, a story on BuzzFeed, a lengthy exposé on Scientology, was outranked in Google search and Google News by a similar HuffPo stub. At a previous job, my stories were often outranked on Google by their word-for-word reproductions on an Australian franchise. In all these cases, the publishers are partly to blame: By not using HuffPo-style aggressive search engine optimization (for a variety of reasons), The Verge, and others, are leaving traffic on the table.

For Google, this is far more damning: Google is the table. A site should not be able to auto-post a stub of another story and immediately outrank it in the world's most popular and powerful search engine — that is a bug. And on the surface, it seems like an easy one to fix: One story was posted days later, with a small word-for-word excerpt of the other's text. Even to a machine, it seems like it ought to be easy to tell which one of these posts is derivative of the other.

Google's technological reality is probably more complicated than it looks: The Huffington Post's stub text is surrounded by links and tweets and comments, many of which connect to other sites with very good PageRank values. And an algorithm might not have the same ability to quickly intuit that a large page with dozens of elements is really just a tiny repost of another, and that those elements don't add much of value.

But a user shouldn't have to understand that, or care. To a searcher, this is a frustrating glitch, and feels like getting spammed. It feels, more than anything, like Google isn't working, and that search is getting worse. And it might lead the user to wonder: If Google hasn't fixed problems this basic yet, will it ever? Could it, if it wanted to?

(Google's head of Webspam and longtime search quality point man Matt Cutts has not responded to a request for comment.)