Not only does your phone know where you are at all times — that's been true for years — it now knows exactly what method of transportation you've chosen to get there.

A new phone bought today can sense if you are walking or running, if you drove to your destination in a car or hopped on a bike. Far better than most pedometers, it can tell you how many steps you've taken and in which direction you went. It knows how long you stayed out at the bar last weekend and how you got home. And it's getting more accurate by the day.

In addition to GPS receivers, modern smartphones contain a variety of tiny motion sensors that assist all kinds of mundane, but essential, tasks. There's a compass, a gyroscope that lets your phone know how you're holding it, and accelerometers to determine relative movement.
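To make the pedometer idea concrete, here is a minimal sketch of how step counting from accelerometer readings might work. It is an illustration under stated assumptions, not how any particular phone does it: the function name `count_steps`, the 1.5 m/s² threshold, and the sample format are all hypothetical.

```python
import math

GRAVITY = 9.81  # m/s^2, roughly what an accelerometer reads when the phone is at rest


def count_steps(samples, threshold=1.5):
    """Count steps as upward crossings of a threshold.

    samples: iterable of (x, y, z) accelerations in m/s^2.
    threshold: hypothetical cutoff for how far the total acceleration
        must rise above gravity before a jolt counts as a step.
    """
    steps = 0
    above = False  # True while we are inside a jolt that was already counted
    for x, y, z in samples:
        deviation = math.sqrt(x * x + y * y + z * z) - GRAVITY
        if deviation > threshold and not above:
            steps += 1
            above = True
        elif deviation < threshold / 2:
            # Hysteresis: wait for the signal to settle before counting again.
            above = False
    return steps


# Two jolts separated by rest are counted as two steps.
readings = [(0, 0, 9.81), (0, 0, 12.5), (0, 0, 9.81), (0, 0, 12.5), (0, 0, 9.81)]
print(count_steps(readings))
```

Using the total magnitude rather than a single axis means the count does not depend on how the phone is oriented in a pocket or bag.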

But these sensors have just started to reach their potential. The minute movements and rotations of your phone, in conjunction with its awareness of your location, can provide a shockingly complete picture of not just where you are, but what exactly you're doing.

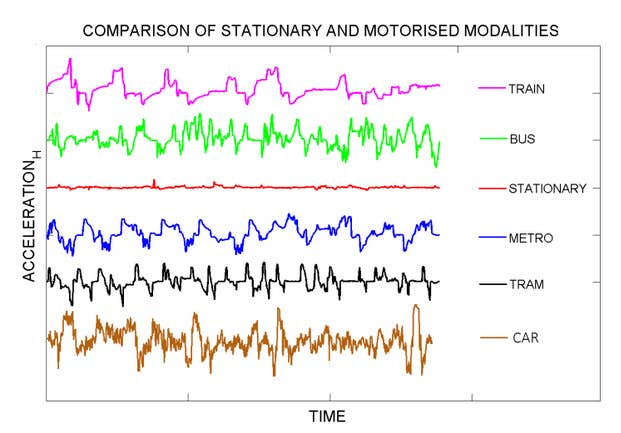

Researchers at the University of Helsinki announced they've developed an algorithm that accurately reveals modes of transportation based solely on movement data collected from mobile phones. After studying more than 150 hours of accelerometer data, the Finnish team found that its algorithm improved transportation mode detection by more than 20%.
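The Helsinki team's actual method is not described here, but the general idea of inferring a travel mode from accelerometer data alone can be sketched with a toy classifier. Everything in this example is an assumption for illustration: the function name `classify_window`, the three labels, and both thresholds are hypothetical, and a real system would use far richer features.

```python
import math
from statistics import pstdev


def classify_window(samples, still_max=0.15, vehicle_max=1.0):
    """Guess a travel mode from one window of accelerometer samples.

    samples: non-empty list of (x, y, z) accelerations in m/s^2.
    still_max, vehicle_max: hypothetical cutoffs on how much the total
        acceleration varies within the window.
    """
    magnitudes = [math.sqrt(x * x + y * y + z * z) for x, y, z in samples]
    spread = pstdev(magnitudes)  # population standard deviation
    if spread < still_max:
        return "stationary"  # almost no movement at all
    if spread < vehicle_max:
        return "motorized"   # low, steady vibration, as in a car or bus
    return "walking"         # strong, repeated jolts from footfalls


still = [(0, 0, 9.81)] * 50
riding = [(0, 0, 10.31), (0, 0, 9.31)] * 25
walking = [(0, 0, 12.81), (0, 0, 6.81)] * 25

for window in (still, riding, walking):
    print(classify_window(window))
```

The sketch relies on one observation: footsteps jolt a phone much harder than a smooth ride does, so the spread of the readings alone already separates some modes.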

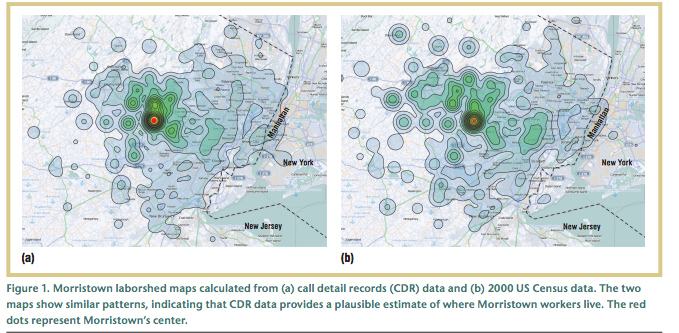

In other, similar research, even the roughest data has painted a remarkably clear picture. A 2011 study by AT&T Labs found that call detail records (CDRs), which anonymously document the wireless signal of every SMS message and call, from Morristown, N.J., look very similar to U.S. census data for the same area. According to the study:

We can identify patterns of human activity in different parts of a city — a city's lifebeat — by observing cell phone usage in different cell tower antenna coverage regions. By studying these patterns, city officials could potentially model the typical flow of people between different parts of the city over time. Monitoring these patterns might in turn allow the timely detection of anomalies such as dangerous overcrowding surrounding a popular music concert, or following the traffic flow during a weather emergency.

And here's what it looked like:

Now, with Apple's M7 motion-sensing chip — which is standard inside the iPhone 5S and stays alert even when the phone is in standby mode — we're about to see movement data reach new levels of accuracy.

While the most obvious application for this type of movement technology lies within fitness and health apps, which will no doubt see substantial leaps in tracking ability, nonstop measurement via smartphone could have serious implications in areas like city planning, all the way to modern psychology and medicine. As David Talbot noted during the debut of the iPhone 5S, our phones (and eventually our wearable devices) won't take long to recognize our gestures and, quite possibly, even begin to pick up on our emotional patterns.

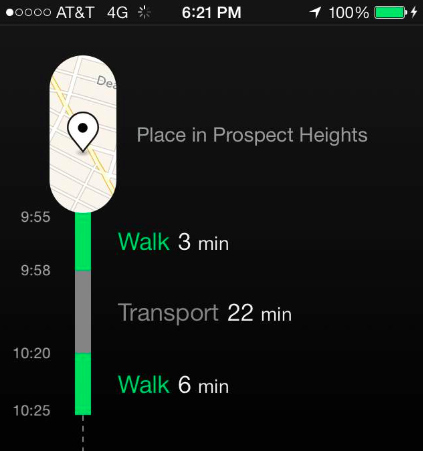

Sampo Karjalainen, the lead designer and CEO of Moves, an activity and fitness tracking app, notes that some in government are paying attention to companies collecting movement data. "We've been approached by some cities who'd like to use the data for city planning purposes," Karjalainen told BuzzFeed. While Moves has decided, for now, not to provide city governments with data (it leaves the decision to donate anonymized data up to the individual user), Karjalainen noted that the future of movement-based data is bright.

"There's standard location data for sure, but there are also other kinds of contextual data you can collect as well, like which communication method (text, voice, picture) you used and where, scanning calendar entries and the photos you take. You could use the phone's microphone to listen in on the outside environment while idle and change phone settings and do much more to document different elements of your life," Karjalainen said. "Collecting data from a run or a bike ride is the first step, but then there's collecting the smaller data that allows you to truly understand your habits and make real changes."

For some, a product like Moves will no doubt feel like a serious invasion of personal space, and the data it produces the beginning of a disturbing trend. While Karjalainen and Moves are protecting the privacy of their users' data, the decision to share location and movement information is, as always, up to the companies doing the collecting. After I used the app for about a week, it was striking to see my data laid out. While not always totally accurate, some things, like my commute, are perfectly documented. And though the information itself is pretty mundane, it's rather unnerving to see a quantified version of your day.

It's equally easy, though, to see how tech companies and techno-utopians will justify collecting and analyzing this data. Just imagine this future scenario: You drive to meet up with friends at a bar. Your phone or smartwatch senses you arrived in a car. After a couple of hours at the location, it notices erratic movements and gesticulations out of the ordinary. The conclusion: You've had a couple of drinks and you might be driving soon, so maybe you get a push alert with the number for a cab service later in the evening when you walk out the door. It's an entirely hypothetical and invasive-sounding scenario, but one that's not far from being plausible, at least from a technological standpoint.

For most health and fitness apps, the ones that have the biggest leg up on monitoring and tracking this kind of data, it's unclear how far they'll push into these more invasive territories. "In the end, we decided only to focus on the most popular activities for fitness, like running, walking, and cycling," Karjalainen said. "We did some experiments collecting transportation data to see if we could distinguish between types internally, and we realized it was not a big priority for us to monitor everything right now." That said, as technology allows, Karjalainen said he plans to broaden Moves' capabilities, which means asking even more of its users. "As we go forward I think we'd be willing to recognize more activities to be able to tell, in more human terms, what the user did during the day."