Over the past few years, people have had problematic old tweets resurface, and some have faced permanent consequences.

I have made the choice to step down from hosting this year's Oscar's....this is because I do not want to be a distraction on a night that should be celebrated by so many amazing talented artists. I sincerely apologize to the LGBTQ community for my insensitive words from my past.

I’ve seen a few of my old tweets from 7/8 years ago floating around (which I have now deleted) using words like “chav” “skank” and other words I wouldn’t use now as part of my language and lot of them were taken out of context referring to TV shows but I would never say those

Wood says the timing for his app has never been better.

Imagine your kids looking at your old tweets and saying your cancelled as their father

He said: “We’re living in a time where consumers want to own their data. People have been reminded that they will be held accountable in the court of public opinion. There have been constant reminders that what you post online matters and can be costly.”

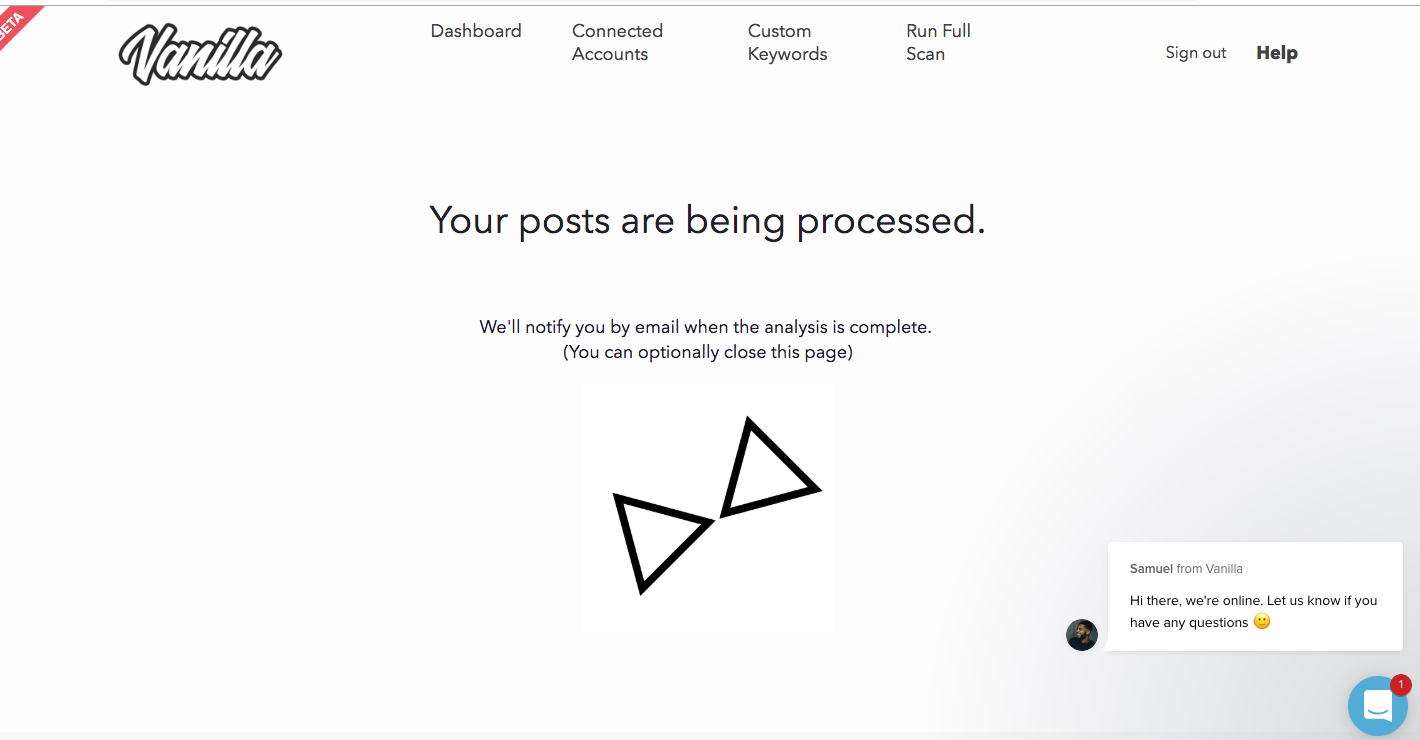

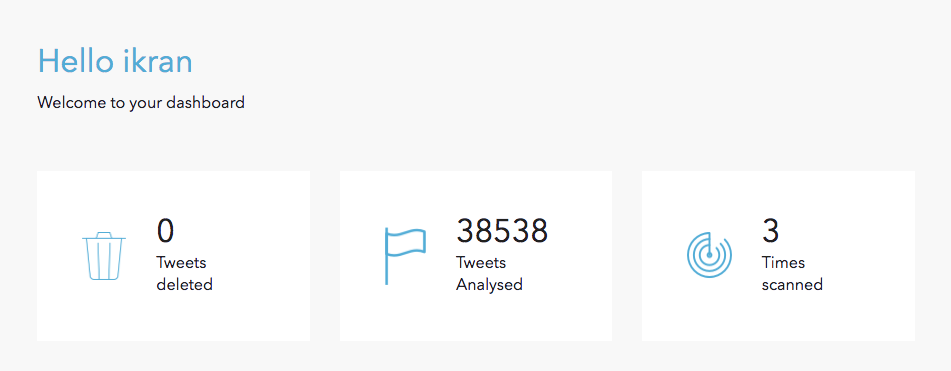

He said that over 1,000,000 tweets have already been scanned through the app, so I decided to scan my Twitter archive.

About a day later, all 38K of my tweets had been scanned by Vanilla.

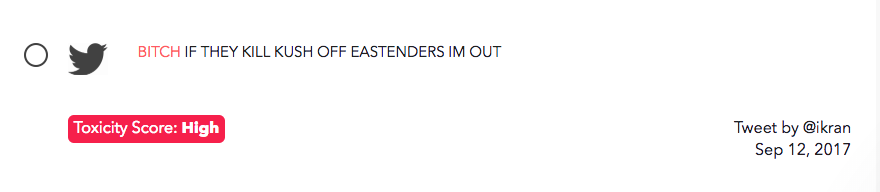

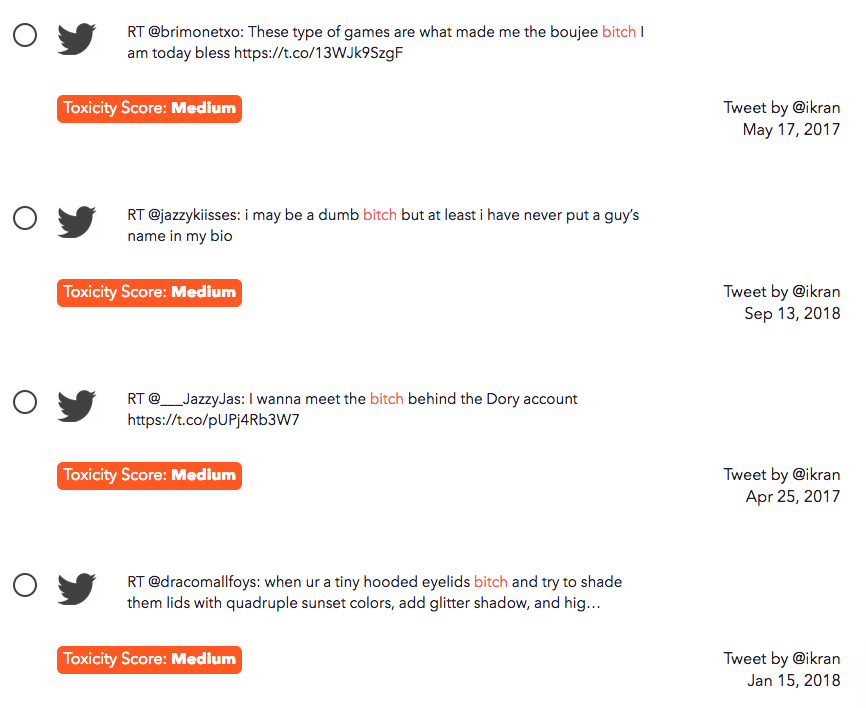

The software flagged some of my tweets with a high “toxicity score”, but they were basically me screaming at TV shows.

Many of the tweets the app flagged contained words that could be problematic if used in a different context.

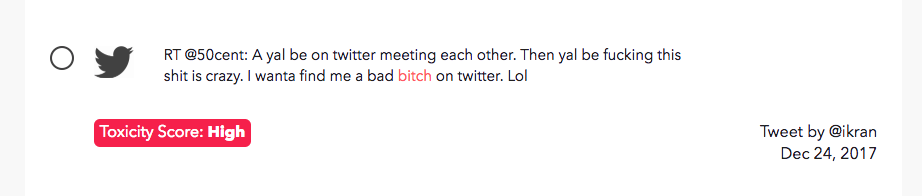

The software also scans retweets, and flagged this funny tweet by 50 Cent.

And this one by Ed Balls tweeting his own name.

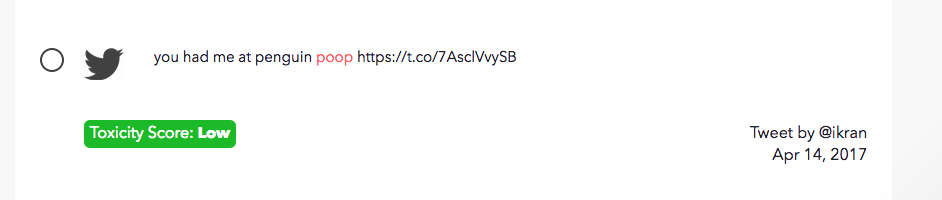

And me saying the word “poop”.