Across YouTube, an unsettling trend has emerged: Accounts are publishing disturbing and exploitative videos aimed at and starring children in compromising, predatory, or creepy situations — and racking up millions of views.

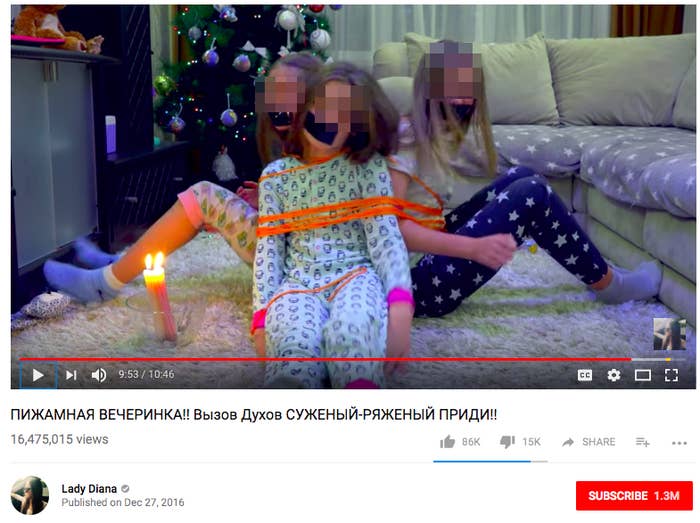

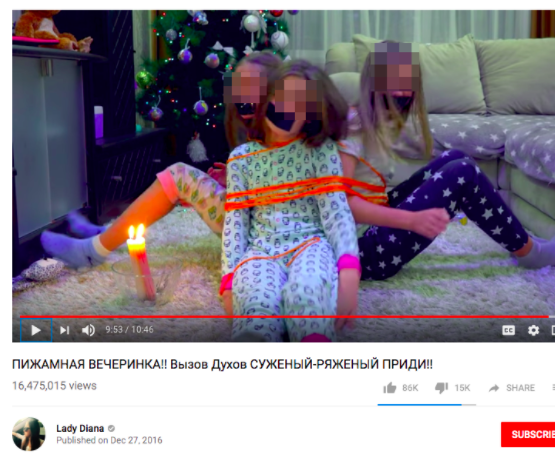

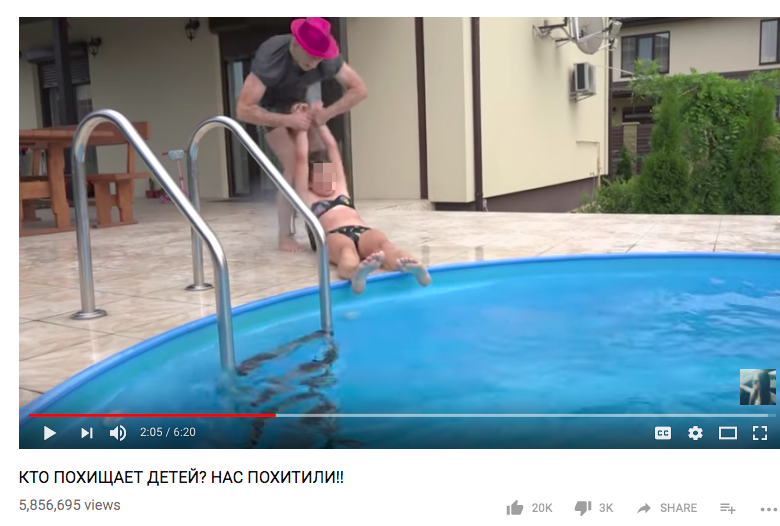

BuzzFeed News has found a number of videos, many of which appear to originate from eastern Europe, that feature young children, often in revealing clothing, placed in vulnerable scenarios. In many instances, they're restrained with ropes or tape and sometimes crying or in visible distress. In other videos, the children are kidnapped, or made to 'play doctor' with an adult. The videos frequently include gross-out themes like injections, eating feces, or needles. Many come from YouTube 'verified' channels and have tens of millions of views. After BuzzFeed News brought these videos to the attention of YouTube, they were removed.

In recent weeks, YouTube has faced criticism after reports about unsettling animated videos and other bizarre content aimed at children using family-friendly characters, some of which was not caught by YouTube Kids’ filters. All of the videos reviewed by BuzzFeed News were live-action, ostensibly set up by adults and featuring children. Taken together, they make up a vast, disturbing, and wildly popular universe of videos that, until recently, existed unmoderated by the platform.

That appears to be changing. On Tuesday afternoon, BuzzFeed News contacted YouTube regarding a number of verified accounts — each with millions of subscribers — with hundreds of disturbing videos showing children in distress. As of Wednesday morning, all the videos provided by BuzzFeed News, as well as the accounts, were suspended for violating YouTube's rules. YouTube told BuzzFeed News that the deletions were part of a scheduled effort to combat this type of content and that some of the accounts and videos provided by BuzzFeed News were already scheduled to be purged.

"In the last week we terminated over 50 channels and have removed thousands of videos under these guidelines," YouTube said in a statement. All of the videos featured below were still up as of Tuesday evening.

According to YouTube, the deletions are part of a bigger effort to weed out exploitative content on the platform. Last week the company terminated ToyFreaks, a massively popular account run by Greg Chism, for videos that bordered on child abuse. The account featured videos of Chism's daughters screaming in fear, bathing, pretending to be babies, spitting up food, being force-fed, and 'peeing.' The account had 8 million followers around the time YouTube shut it down.

In a company blog post Wednesday afternoon, YouTube pledged to address the issue comprehensively by fixing gaps in its enforcement policy. Starting today, the company is purging accounts that — like ToyFreaks — appear to show child endangerment. It is also age-restricting cartoons and animated videos that feature kid-friendly characters engaged in bizarre or adult situations.

The company is also cracking down on advertising on videos that feature family entertainment characters engaged in violence. According to YouTube, the company has removed ads from 3 million of these videos since June. The site also plans a new initiative to disable all comments on any videos featuring children in exploitative situations. According to YouTube, if a video featuring children begins to attract inappropriate comments, the site will disable the comment thread for that video. The company will also provide written guidance for creators who make family-friendly content. Lastly, the company said it would work with child safety experts to continue to identify troubling trends like this one, which the company allowed to thrive on its platform for years. Many of the offending channels were even verified by YouTube, a process that the company says was done automatically as recently as 2016. The company says it has re-evaluated the verification process to add more human oversight.

Before YouTube removed them, these live-action child exploitation videos were rampant and easy to find. What's more, they were allegedly on YouTube's radar: Matan Uziel — a producer and activist who leads Real Women, Real Stories (a platform for women to recount personal stories of trauma, including rape, sexual assault, and sex trafficking) and who provided BuzzFeed News with more than 20 examples of such videos — told BuzzFeed News that he tried multiple times to bring the videos to YouTube's attention and that no substantive action was taken.

On September 22, Uziel sent an email to YouTube CEO Susan Wojcicki and three other Google employees (as well as FBI agents) expressing his concern about "tens of thousands of videos available on YouTube that we know are crafted to serve as eye candy for perverted, creepy adults, online predators to indulge in their child fantasies." According to the email, which was reviewed by BuzzFeed News, Uziel included multiple screenshots of disturbing videos. Uziel also told BuzzFeed News that he raised his concerns about the videos early this fall in a Google Hangout with two Google communications staffers from the United Kingdom, and that Google expressed a desire to address the situation. A YouTube spokesperson said that the company has no record of the September 22 email but told BuzzFeed News that Uziel did email on September 13 with screenshots of offending videos. The company says it removed every video escalated by Uziel.

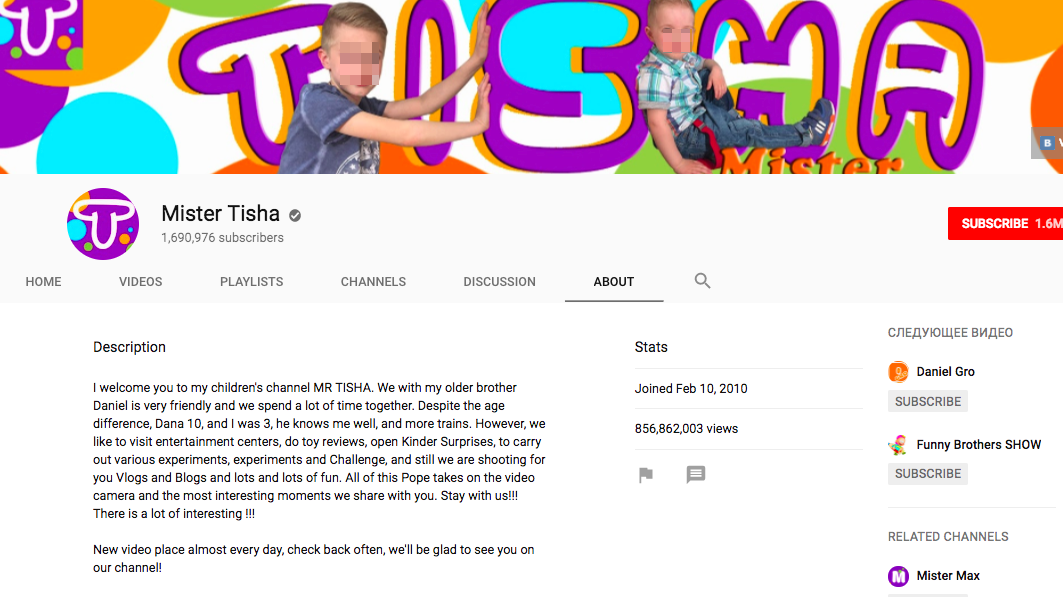

Like ToyFreaks, Mister Tisha was a verified YouTube account run by a family and featuring children as actors. It had 1.6 million subscribers as of Tuesday.

The account was terminated by YouTube shortly after BuzzFeed News contacted YouTube.

A recurring series of videos from Mister Tisha includes the keywords "Bad Baby" and involves a child disobeying an adult. The adult is often seen holding a belt when he discovers the child.

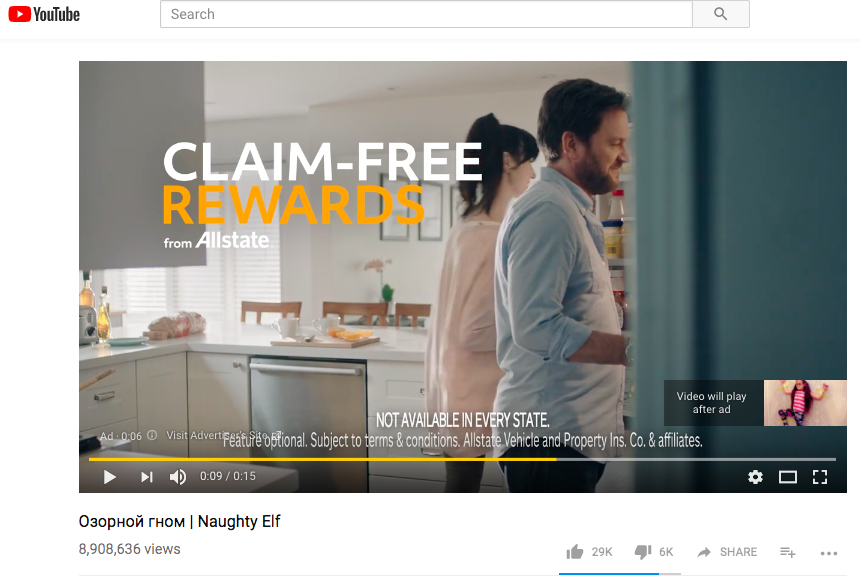

As with similar accounts, Lady Diana's videos appear to be monetized. Multiple videos carried programmatic pre-roll advertisements, including one called "Naughty Elf," which had 8.9 million views and featured a still image of a young girl taped to a wall.

Thanks to YouTube's autoplay feature for recommended videos, when users watch one popular disturbing children's video, they're more likely to stumble down an algorithm-powered exploitative video rabbit hole. After BuzzFeed News screened a series of these videos, YouTube began recommending other disturbing videos from popular accounts like ToysToSee.

The autoplay recommendation algorithm surfaces videos from multiple accounts, including ones built on a recurring trope in which bugs attack children. One of the featured videos had over 90 million views.

Videos from less popular accounts are frequently more disturbing. A number of videos viewed by BuzzFeed News showed very young children playing doctor with an adult who exposed the upper parts of the child's buttocks in order to mock inject them with a syringe, children pretending to eat feces out of a diaper, and children in bathing suits seemingly being abducted and held under water until unconscious by adults.

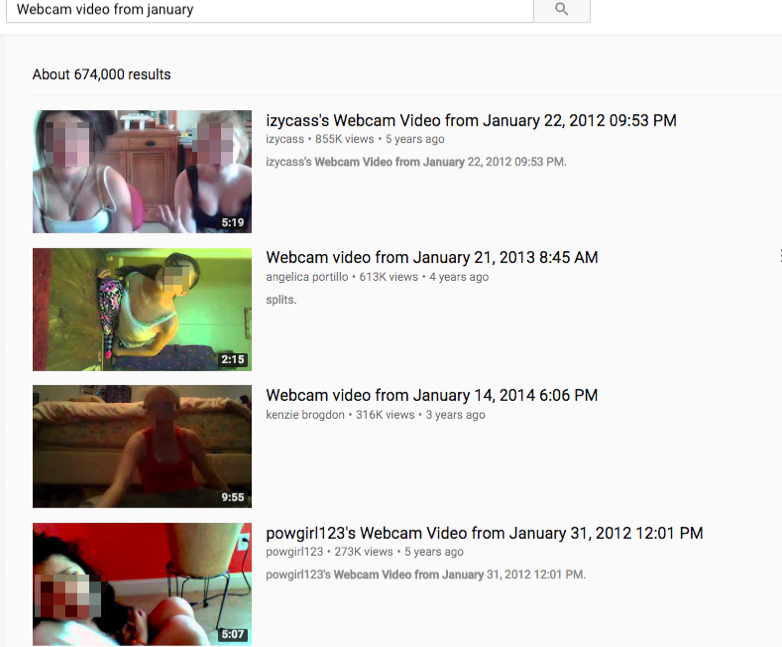

Perhaps most unsettling, however, is a series of webcam videos of young girls which, unlike the ToyFreaks style of videos, do not appear to be aimed at children. These videos come mostly from one-off accounts and show scantily clad young girls talking or singing in front of webcams.

Some of the videos have millions of views. Many have been up for years. One video, for example, was published in September 2012 and features a young girl in a nightgown.

Like many videos in this genre, it features predatory comments.

Both the child actor and webcam videos present a specific challenge to YouTube in its effort to moderate potentially exploitative content on its platform. While all of the videos are bizarre and disturbing, many are creepy in ways that may be difficult for a moderation algorithm to discern. In some cases, the videos occupy a strange gray area between play acting and truly abusive behavior. Some videos, for example, show children taped to walls while laughing, while other videos of children playing doctor seem silly, with children who appear to be having fun.

These videos, while still bizarre and disturbing, likely pass through some of YouTube's algorithmic moderation channels. In previous statements to numerous outlets, including BuzzFeed News, YouTube has said that it "will be conducting a broader review of associated content in conjunction with expert Trusted Flaggers." And the recent blog post details significant changes in enforcement on this content.

Still, it's unclear whether these programs are substantial enough to catch and limit the volume of disturbing children's content across the platform. YouTube told BuzzFeed News that it plans to continue to evolve its policies alongside the bad actors who will inevitably attempt to keep posting disturbing content of this nature. While the company noted that this will be an ongoing fight, it suggested that machine learning will play an important role in addressing the issue at scale.

UPDATE

This post was updated to reflect a statement from YouTube regarding its contact with Matan Uziel.